multipath: multipathing software

Updated: December 6, 2023

Contents

[toc]

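1. What is multipath

multipath (Device Mapper Multipath) is the Linux mechanism for path redundancy and load balancing to storage devices. With it, the same device (LUN) can be reached over several paths, and reads and writes are distributed across those paths according to a configurable path selector. If a path fails, I/O is switched automatically to one of the remaining paths. This improves both the availability and the throughput of the storage.

2. The multipath configuration file

multipath is configured through /etc/multipath.conf. The file holds the basic multipath parameters as well as per-array settings (matched by vendor and product), such as the path grouping policy and the path priority.

demo1 (minimal configuration)

[root@docker ~]#cat /etc/multipath.conf
defaults {
    user_friendly_names yes
    find_multipaths yes
}

demo2

The following is an example of a multipath.conf file: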

defaults {
    user_friendly_names yes
    find_multipaths yes
    path_grouping_policy group_by_prio
    path_selector "round-robin 0"
    failback immediate
    rr_min_io 100
}

blacklist {
    devnode "^(ram|raw|loop|fd|md|dm-|sr|scd|st)[0-9]*"
    devnode "^hd[a-z][0-9]*"
    devnode "^cciss!c[0-9]*d[0-9]*"
    devnode "^ssv-(.*)(zer|tic)"
}

blacklist_exceptions {
    wwid ".*"
}

devices {
    device {
        vendor "NETAPP"
        product "LUN"
        path_grouping_policy group_by_prio
        prio "alua"
        features "1 queue_if_no_path"
        hardware_handler "1 alua"
    }
}

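As you can see, multipath.conf consists of the following sections:

- defaults: default settings, such as user_friendly_names (use friendly device names such as mpatha instead of the WWID) and find_multipaths (create multipath devices only for devices that actually have more than one path).

- blacklist: devices that multipath must ignore. The example excludes device node names such as ram, raw, loop and fd.

- blacklist_exceptions: devices that multipath should handle even though a blacklist entry matches them. The example exempts every WWID (".*"). According to the Red Hat DM Multipath documentation, an exception only overrides blacklist entries of the same type, so a wwid exception does not undo the devnode entries above.

- devices: per-array settings. The example defines one device entry, matched by vendor and product, and sets several attributes for it.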
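Since every section is a brace-delimited block, a quick brace count can catch a truncated or mis-pasted file before multipathd is restarted. The following is only a rough sketch: `check_braces` is a hypothetical helper name, it ignores everything after a `#`, and it is not a real parser (a brace inside a quoted regex would be counted too).

```shell
#!/bin/sh
# Rough sanity check for a multipath.conf-style file (hypothetical helper):
# count opening and closing braces, ignoring comments.
check_braces() {
    awk '
        { sub(/#.*/, "") }                  # strip comments
        {
            nopen  += gsub(/[{]/, "{")      # gsub returns the number of matches
            nclose += gsub(/[}]/, "}")
        }
        END {
            if (nopen != nclose) {
                printf "unbalanced: %d open, %d close\n", nopen, nclose
                exit 1
            }
            printf "balanced: %d block(s)\n", nopen
        }
    ' "$1"
}

# Usage: check_braces /etc/multipath.conf
```

For an authoritative check, `multipath -t` prints the configuration as multipath itself parsed it.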

demo3

blacklist {
    wwid 3600508b1001c044c39717726236c68d5
}

defaults {
    user_friendly_names yes
    polling_interval 10
    queue_without_daemon no
    flush_on_last_del yes
    checker_timeout 120
}

devices {
    device {
        # vendor and product must match the strings the array actually reports
        vendor "3par8400"
        product "HP"
        # the original value here was "asmdisk", which is not a valid policy
        path_grouping_policy group_by_prio
        no_path_retry 30
        prio hp_sw
        path_checker tur
        path_selector "round-robin 0"
        hardware_handler "0"
        failback 15
    }
}

# For two or more LUNs, add one multipath block per WWID.
multipaths {
    multipath {
        wwid 360002ac0000000000000000300023867
        alias mpathdisk01
    }
    multipath {
        wwid 360002ac0000000000000000400023867
        alias mpathdisk02
    }
}

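Field reference

The block above is a multipath.conf file that configures multipath devices on Linux. The fields mean the following:

- blacklist: devices excluded from multipathing; a device whose WWID matches any entry in the list is ignored.
- wwid: the World Wide Identifier that uniquely identifies a LUN (in the examples here, a 33-character hexadecimal string).
- defaults: default settings, which other sections can override.
- user_friendly_names: use friendly names for multipath devices instead of using the WWID as the name.
- polling_interval: interval between path checks, in seconds.
- queue_without_daemon: whether I/O keeps being queued while the multipathd daemon is not running.
- flush_on_last_del: whether queueing is disabled when the last path to a device is deleted.
- checker_timeout: timeout for the path checker, in seconds.
- devices: contains one or more device blocks, each holding settings for one type of storage array.
- device: settings for the arrays matched by vendor and product.
- vendor, product: the vendor and product strings reported by the storage device.
- path_grouping_policy: how paths are grouped into path groups (for example multibus or group_by_prio).
- no_path_retry: number of retries before I/O queueing is disabled once all paths have failed (fail and queue are also accepted values).
- prio: the prioritizer used to compute each path's priority, such as alua, emc or hp_sw.
- path_checker: the method used to check path state (for example tur or directio).
- path_selector: the algorithm that picks the path for the next I/O within a path group. For example, "round-robin 0" sends requests to each path in turn.
- hardware_handler: the hardware-specific handler used when switching path groups or handling I/O errors ("0" means none).
- failback: how failback to the preferred path group is handled: immediate, manual, or a delay in seconds.
- multipaths: contains one or more multipath blocks, each holding settings for a single LUN identified by its WWID.
- alias: the name given to that multipath device.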
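To review which alias is bound to which WWID, the multipath blocks can be pulled out of the file with a few lines of awk. This is a minimal sketch under simple assumptions: `list_aliases` is a hypothetical helper, each block closes with a `}` on its own line, and values contain no spaces.

```shell
#!/bin/sh
# Print "alias wwid" pairs for every block that sets both keywords
# (hypothetical helper; a rough text scan, not a real parser).
list_aliases() {
    awk '
        { sub(/#.*/, "") }                                # strip comments
        $1 == "wwid"  { gsub(/"/, "", $2); wwid = $2 }
        $1 == "alias" { gsub(/"/, "", $2); name = $2 }
        /[}]/ {
            if (wwid != "" && name != "") print name, wwid
            wwid = ""; name = ""                          # reset at block end
        }
    ' "$1"
}

# Usage: list_aliases /etc/multipath.conf
```

A wwid line inside a blacklist block is skipped because that block never sets an alias.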

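prio is the multipath.conf keyword that selects the prioritizer, that is, the method used to assign a priority to each path. Policies such as group_by_prio then use these priorities to build the path groups. For example:

prio alua

This setting derives the path priorities from the Asymmetric Logical Unit Access (ALUA) state reported by the array. It is mainly used in SAN environments where the array distinguishes between optimized and non-optimized paths.

Besides alua, other prioritizers are available, for example:

- emc: for EMC storage arrays.
- hp_sw: for HP storage arrays.
- rdac: for LSI storage arrays.

If prio is not set, the default is const, which gives every path the same priority.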

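3. Commands

The multipath tool provides command-line options for working with multipath devices. Commonly used ones:

- multipath -ll: show the multipath topology using all available information, including the path checker results. (common)

- multipath -l: show the multipath topology from sysfs and the device mapper only, without running the path checkers.

- multipath -F: flush (remove) all unused multipath device maps. (common)

- multipath -f <device>: flush (remove) the specified multipath device map, if it is unused.

- multipath -r: force a reload of the multipath device maps.

There are many more options; see man multipath for details.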

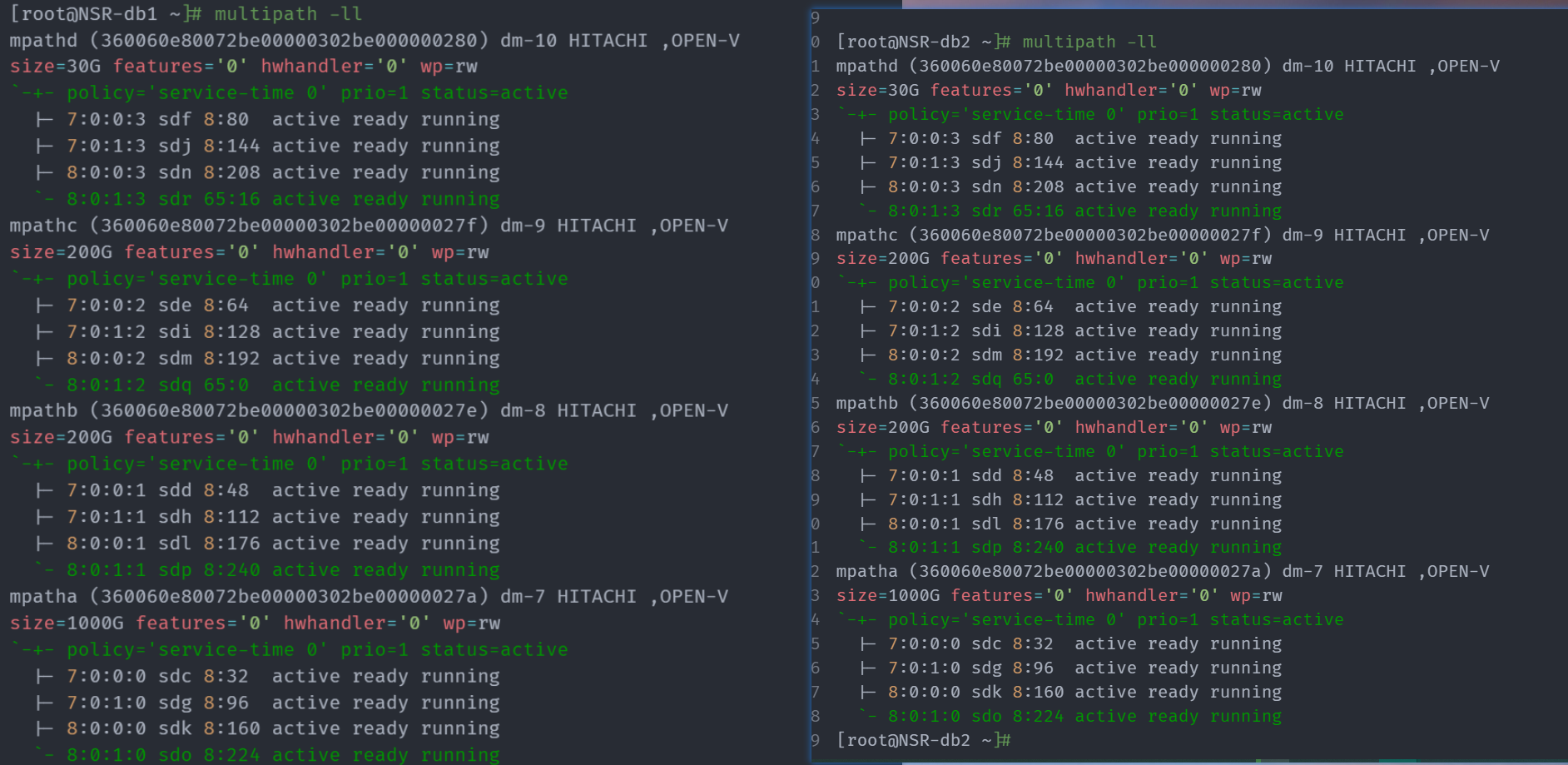

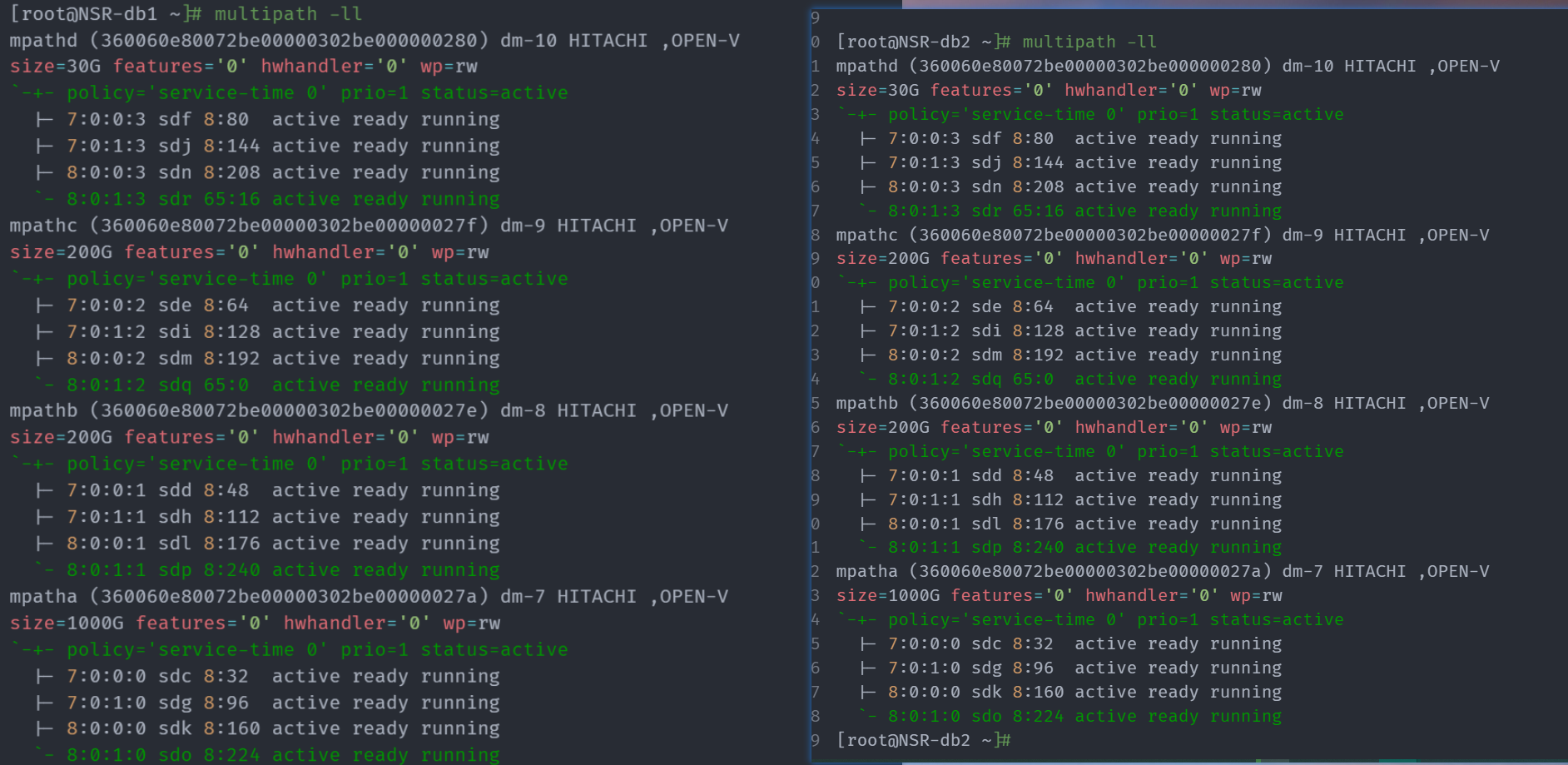

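Example: show multipath device information (common)

Use the following command to list all multipath devices on the system together with their WWIDs:

multipath -ll

The command prints each multipath device's alias, WWID, and paths.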

[root@NSR-db1 ~]# multipath -ll
mpathd (360060e80072be00000302be000000280) dm-10 HITACHI ,OPEN-V
size=30G features='0' hwhandler='0' wp=rw
`-+- policy='service-time 0' prio=1 status=active
  |- 7:0:0:3 sdf 8:80 active ready running
  |- 7:0:1:3 sdj 8:144 active ready running
  |- 8:0:0:3 sdn 8:208 active ready running
  `- 8:0:1:3 sdr 65:16 active ready running
mpathc (360060e80072be00000302be00000027f) dm-9 HITACHI ,OPEN-V
size=200G features='0' hwhandler='0' wp=rw
`-+- policy='service-time 0' prio=1 status=active
  |- 7:0:0:2 sde 8:64 active ready running
  |- 7:0:1:2 sdi 8:128 active ready running
  |- 8:0:0:2 sdm 8:192 active ready running
  `- 8:0:1:2 sdq 65:0 active ready running
mpathb (360060e80072be00000302be00000027e) dm-8 HITACHI ,OPEN-V
size=200G features='0' hwhandler='0' wp=rw
`-+- policy='service-time 0' prio=1 status=active
  |- 7:0:0:1 sdd 8:48 active ready running
  |- 7:0:1:1 sdh 8:112 active ready running
  |- 8:0:0:1 sdl 8:176 active ready running
  `- 8:0:1:1 sdp 8:240 active ready running
mpatha (360060e80072be00000302be00000027a) dm-7 HITACHI ,OPEN-V
size=1000G features='0' hwhandler='0' wp=rw
`-+- policy='service-time 0' prio=1 status=active
  |- 7:0:0:0 sdc 8:32 active ready running
  |- 7:0:1:0 sdg 8:96 active ready running
  |- 8:0:0:0 sdk 8:160 active ready running
  `- 8:0:1:0 sdo 8:224 active ready running
[root@NSR-db1 ~]#
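With many LUNs it is easier to read a per-device path count than the full topology. The sketch below is one possible way to summarize `multipath -ll` output; `count_paths` is a hypothetical helper, and it assumes that a map header line contains a `dm-N` field and that each path line contains an `H:C:T:L` field, as in the listing above.

```shell
#!/bin/sh
# Summarize "multipath -ll" output read from stdin as "<map> <path count>"
# (hypothetical helper; relies on the output layout shown above).
count_paths() {
    awk '
        {
            is_header = 0
            for (i = 1; i <= NF; i++) {
                if ($i ~ /^dm-[0-9]+$/) is_header = 1
                if ($i ~ /^[0-9]+:[0-9]+:[0-9]+:[0-9]+$/) count[map]++
            }
            if (is_header) {            # new map: remember its name and order
                map = $1
                order[++n] = map
                count[map] += 0
            }
        }
        END { for (i = 1; i <= n; i++) print order[i], count[order[i]] }
    '
}

# Usage: multipath -ll | count_paths
```

For the output above this would report four paths for each of the four devices.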

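Example: flush the multipath device maps

multipath -F: flush (remove) all unused multipath device maps. (common)

-v lvl verbosity level
  . 0 no output
  . 1 print created devmap names only
  . 2 default verbosity
  . 3 print debug information

# flush all unused maps
multipath -F

# flush, then re-create the maps with default verbosity
multipath -v2 -F
multipath -v2

# flush, then rescan the devices and re-create the maps with debug output
multipath -v3 -F
multipath -v3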

[root@NSR-db1 ~]# multipath -F

[root@NSR-db1 ~]# multipath -v2 -F
[root@NSR-db1 ~]# multipath -v3 -F
Dec 05 14:37:55 | set open fds limit to 1048576/1048576
Dec 05 14:37:55 | loading /lib64/multipath/libcheckdirectio.so checker
Dec 05 14:37:55 | loading /lib64/multipath/libprioconst.so prioritizer
Dec 05 14:37:55 | unloading const prioritizer
Dec 05 14:37:55 | unloading directio checker
[root@NSR-db1 ~]#

Example: output of -v2 and -v3

-v lvl verbosity level
  . 0 no output
  . 1 print created devmap names only
  . 2 default verbosity
  . 3 print debug information

[root@NSR-db1 ~]# multipath -v2
create: mpatha (360060e80072be00000302be00000027a) undef HITACHI ,OPEN-V
size=1000G features='0' hwhandler='0' wp=undef
`-+- policy='service-time 0' prio=1 status=undef
  |- 7:0:0:0 sdc 8:32 undef ready running
  |- 7:0:1:0 sdg 8:96 undef ready running
  |- 8:0:0:0 sdk 8:160 undef ready running
  `- 8:0:1:0 sdo 8:224 undef ready running
create: mpathb (360060e80072be00000302be00000027e) undef HITACHI ,OPEN-V
size=200G features='0' hwhandler='0' wp=undef
`-+- policy='service-time 0' prio=1 status=undef
  |- 7:0:0:1 sdd 8:48 undef ready running
  |- 7:0:1:1 sdh 8:112 undef ready running
  |- 8:0:0:1 sdl 8:176 undef ready running
  `- 8:0:1:1 sdp 8:240 undef ready running
create: mpathc (360060e80072be00000302be00000027f) undef HITACHI ,OPEN-V
size=200G features='0' hwhandler='0' wp=undef
`-+- policy='service-time 0' prio=1 status=undef
  |- 7:0:0:2 sde 8:64 undef ready running
  |- 7:0:1:2 sdi 8:128 undef ready running
  |- 8:0:0:2 sdm 8:192 undef ready running
  `- 8:0:1:2 sdq 65:0 undef ready running
create: mpathd (360060e80072be00000302be000000280) undef HITACHI ,OPEN-V
size=30G features='0' hwhandler='0' wp=undef
`-+- policy='service-time 0' prio=1 status=undef
  |- 7:0:0:3 sdf 8:80 undef ready running
  |- 7:0:1:3 sdj 8:144 undef ready running
  |- 8:0:0:3 sdn 8:208 undef ready running
  `- 8:0:1:3 sdr 65:16 undef ready running
[root@NSR-db1 ~]#

[root@NSR-db1 ~]# multipath -v3
Dec 05 14:39:09 | set open fds limit to 1048576/1048576
Dec 05 14:39:09 | loading /lib64/multipath/libcheckdirectio.so checker
Dec 05 14:39:09 | loading /lib64/multipath/libprioconst.so prioritizer
Dec 05 14:39:09 | sda: not found in pathvec
Dec 05 14:39:09 | sda: mask = 0x3f
Dec 05 14:39:09 | sda: dev_t = 8:0
Dec 05 14:39:09 | sda: size = 937406464
Dec 05 14:39:09 | sda: vendor = PM8060-
Dec 05 14:39:09 | sda: product = 1
Dec 05 14:39:09 | sda: rev = V1.0
Dec 05 14:39:09 | sda: h:b:t:l = 0:0:0:0
Dec 05 14:39:09 | sda: path state = running

Dec 05 14:39:09 | sda: 58350 cyl, 255 heads, 63 sectors/track, start at 0
Dec 05 14:39:09 | sda: serial = E274D1D3
Dec 05 14:39:09 | sda: get_state
Dec 05 14:39:09 | sda: detect_checker = 1 (config file default)
Dec 05 14:39:09 | sda: path checker = directio (internal default)
Dec 05 14:39:09 | sda: checker timeout = 45000 ms (sysfs setting)
Dec 05 14:39:09 | directio: starting new request
Dec 05 14:39:09 | directio: io finished 4096/0
Dec 05 14:39:09 | sda: directio state = up
Dec 05 14:39:09 | sda: uid_attribute = ID_SERIAL (internal default)
Dec 05 14:39:09 | sda: uid = 2d3d174e200d00000 (udev)
Dec 05 14:39:09 | sda: detect_prio = 1 (config file default)
Dec 05 14:39:09 | sda: prio = const (internal default)
Dec 05 14:39:09 | sda: prio args = (internal default)
Dec 05 14:39:09 | sda: const prio = 1
Dec 05 14:39:09 | sdb: not found in pathvec
Dec 05 14:39:09 | sdb: mask = 0x3f
Dec 05 14:39:09 | sdb: dev_t = 8:16
Dec 05 14:39:09 | sdb: size = 4676648960
Dec 05 14:39:09 | sdb: vendor = PM8060-
Dec 05 14:39:09 | sdb: product = raid10
Dec 05 14:39:09 | sdb: rev = V1.0
Dec 05 14:39:09 | sdb: h:b:t:l = 0:0:1:0
Dec 05 14:39:09 | sdb: path state = running

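Example: show the topology without path checks

multipath -l: show the multipath topology from sysfs and the device mapper only. Because the path checkers are not run, the checker state is shown as undef and prio as 0.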

[root@NSR-db1 ~]# multipath -l
mpathd (360060e80072be00000302be000000280) dm-10 HITACHI ,OPEN-V
size=30G features='0' hwhandler='0' wp=rw
`-+- policy='service-time 0' prio=0 status=active
  |- 7:0:0:3 sdf 8:80 active undef running
  |- 7:0:1:3 sdj 8:144 active undef running
  |- 8:0:0:3 sdn 8:208 active undef running
  `- 8:0:1:3 sdr 65:16 active undef running
mpathc (360060e80072be00000302be00000027f) dm-9 HITACHI ,OPEN-V
size=200G features='0' hwhandler='0' wp=rw
`-+- policy='service-time 0' prio=0 status=active
  |- 7:0:0:2 sde 8:64 active undef running
  |- 7:0:1:2 sdi 8:128 active undef running
  |- 8:0:0:2 sdm 8:192 active undef running
  `- 8:0:1:2 sdq 65:0 active undef running
mpathb (360060e80072be00000302be00000027e) dm-8 HITACHI ,OPEN-V
size=200G features='0' hwhandler='0' wp=rw
`-+- policy='service-time 0' prio=0 status=active
  |- 7:0:0:1 sdd 8:48 active undef running
  |- 7:0:1:1 sdh 8:112 active undef running
  |- 8:0:0:1 sdl 8:176 active undef running
  `- 8:0:1:1 sdp 8:240 active undef running
mpatha (360060e80072be00000302be00000027a) dm-7 HITACHI ,OPEN-V
size=1000G features='0' hwhandler='0' wp=rw
`-+- policy='service-time 0' prio=0 status=active
  |- 7:0:0:0 sdc 8:32 active undef running
  |- 7:0:1:0 sdg 8:96 active undef running
  |- 8:0:0:0 sdk 8:160 active undef running
  `- 8:0:1:0 sdo 8:224 active undef running
[root@NSR-db1 ~]#

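Example: look up a disk's WWID

Use the following command to look up a disk's WWID:

sudo udevadm info --query=all --name=/dev/sdX | grep ID_SERIAL

Replace /dev/sdX with the disk you want to inspect, for example /dev/sda or /dev/sdb. The command prints all udev properties of the device, and grep keeps only the lines containing ID_SERIAL, which hold the device's WWID.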
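The same lookup can be run over all disks to see which sdX devices are paths to the same LUN. The following is a sketch only: `list_disk_wwids` and `group_by_wwid` are hypothetical helper names, and it assumes that ID_SERIAL is the attribute multipath uses as the WWID (the `uid_attribute = ID_SERIAL` line in the -v3 output above suggests this for that system).

```shell
#!/bin/sh
# Read "device wwid" pairs on stdin and print one line per WWID,
# followed by all devices that share it (hypothetical helper).
group_by_wwid() {
    awk '
        NF >= 2 {
            if (!($2 in devs)) order[++n] = $2    # keep first-seen order
            devs[$2] = devs[$2] " " $1
        }
        END { for (i = 1; i <= n; i++) print order[i] ":" devs[order[i]] }
    '
}

# Emit "device wwid" pairs for all whole sd disks (needs udevadm).
list_disk_wwids() {
    for dev in /dev/sd*[!0-9]; do
        [ -b "$dev" ] || continue
        serial="$(udevadm info --query=property --name="$dev" | sed -n 's/^ID_SERIAL=//p')"
        printf '%s %s\n' "$dev" "$serial"
    done
}

# Usage: list_disk_wwids | group_by_wwid
```

Devices that end up on the same output line should correspond to the paths multipath groups under one mpath device.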

Example: show the status with multipath -d -l

-d dry run, do not create or update devmaps

[root@NSR-db1 ~]# multipath -d -l
mpathd (360060e80072be00000302be000000280) dm-10 HITACHI ,OPEN-V
size=30G features='0' hwhandler='0' wp=rw
`-+- policy='service-time 0' prio=0 status=active
  |- 7:0:0:3 sdf 8:80 active undef running
  |- 7:0:1:3 sdj 8:144 active undef running
  |- 8:0:0:3 sdn 8:208 active undef running
  `- 8:0:1:3 sdr 65:16 active undef running
mpathc (360060e80072be00000302be00000027f) dm-9 HITACHI ,OPEN-V
size=200G features='0' hwhandler='0' wp=rw
`-+- policy='service-time 0' prio=0 status=active
  |- 7:0:0:2 sde 8:64 active undef running
  |- 7:0:1:2 sdi 8:128 active undef running
  |- 8:0:0:2 sdm 8:192 active undef running
  `- 8:0:1:2 sdq 65:0 active undef running
mpathb (360060e80072be00000302be00000027e) dm-8 HITACHI ,OPEN-V
size=200G features='0' hwhandler='0' wp=rw
`-+- policy='service-time 0' prio=0 status=active
  |- 7:0:0:1 sdd 8:48 active undef running
  |- 7:0:1:1 sdh 8:112 active undef running
  |- 8:0:0:1 sdl 8:176 active undef running
  `- 8:0:1:1 sdp 8:240 active undef running
mpatha (360060e80072be00000302be00000027a) dm-7 HITACHI ,OPEN-V
size=1000G features='0' hwhandler='0' wp=rw
`-+- policy='service-time 0' prio=0 status=active
  |- 7:0:0:0 sdc 8:32 active undef running
  |- 7:0:1:0 sdg 8:96 active undef running
  |- 8:0:0:0 sdk 8:160 active undef running
  `- 8:0:1:0 sdo 8:224 active undef running
[root@NSR-db1 ~]#

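4. ==Hands-on practice==

The following was verified in real production work.

Hands-on: multipath configuration, 2023-12-05 (tested successfully)

Environment:

CentOS 7.9

By default the multipath service is not installed on Linux, so it has to be installed manually.

1. Install

- Install the multipath service

yum install device-mapper-multipath

⚠️ Note:

In my own testing there was no need to load the multipath kernel module by hand; it is loaded automatically when the multipathd service starts (recorded here for reference only).

[root@docker ~]#lsmod |grep multipath
dm_multipath 27792 0
dm_mod 128595 10 dm_multipath,dm_log,dm_mirror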

2. Configure

- What you see right after installing the multipath package

[root@docker ~]#multipath -ll
Dec 05 08:41:19 | DM multipath kernel driver not loaded
Dec 05 08:41:19 | /etc/multipath.conf does not exist, blacklisting all devices.
Dec 05 08:41:19 | A default multipath.conf file is located at
Dec 05 08:41:19 | /usr/share/doc/device-mapper-multipath-0.4.9/multipath.conf
Dec 05 08:41:19 | You can run /sbin/mpathconf --enable to create
Dec 05 08:41:19 | /etc/multipath.conf. See man mpathconf(8) for more details
Dec 05 08:41:19 | DM multipath kernel driver not loaded
[root@docker ~]#

- Next, look at the contents of the file mentioned in the message, /usr/share/doc/device-mapper-multipath-0.4.9/multipath.conf:

[root@docker ~]#cat /usr/share/doc/device-mapper-multipath-0.4.9/multipath.conf
# This is a basic configuration file with some examples, for device mapper
# multipath.
#
# For a complete list of the default configuration values, run either
# multipath -t
# or
# multipathd show config
#
# For a list of configuration options with descriptions, see the multipath.conf
# man page

### By default, devices with vendor = "IBM" and product = "S/390.*" are
### blacklisted. To enable mulitpathing on these devies, uncomment the
### following lines.
#blacklist_exceptions {
#    device {
#        vendor "IBM"
#        product "S/390.*"
#    }
#}

### Use user friendly names, instead of using WWIDs as names.
defaults {
    user_friendly_names yes
    find_multipaths yes
}
###
### Here is an example of how to configure some standard options.
###
#
#defaults {
#    polling_interval 10
#    path_selector "round-robin 0"
#    path_grouping_policy multibus
#    uid_attribute ID_SERIAL
#    prio alua
#    path_checker readsector0
#    rr_min_io 100
#    max_fds 8192
#    rr_weight priorities
#    failback immediate
#    no_path_retry fail
#    user_friendly_names yes
#}
###
### The wwid line in the following blacklist section is shown as an example
### of how to blacklist devices by wwid. The 2 devnode lines are the
### compiled in default blacklist. If you want to blacklist entire types
### of devices, such as all scsi devices, you should use a devnode line.
### However, if you want to blacklist specific devices, you should use
### a wwid line. Since there is no guarantee that a specific device will
### not change names on reboot (from /dev/sda to /dev/sdb for example)
### devnode lines are not recommended for blacklisting specific devices.
###
#blacklist {
#    wwid 26353900f02796769
#    devnode "^(ram|raw|loop|fd|md|dm-|sr|scd|st)[0-9]*"
#    devnode "^hd[a-z]"
#}
#multipaths {
#    multipath {
#        wwid 3600508b4000156d700012000000b0000
#        alias yellow
#        path_grouping_policy multibus
#        path_selector "round-robin 0"
#        failback manual
#        rr_weight priorities
#        no_path_retry 5
#    }
#    multipath {
#        wwid 1DEC_____321816758474
#        alias red
#    }
#}
#devices {
#    device {
#        vendor "COMPAQ "
#        product "HSV110 (C)COMPAQ"
#        path_grouping_policy multibus
#        path_checker readsector0
#        path_selector "round-robin 0"
#        hardware_handler "0"
#        failback 15
#        rr_weight priorities
#        no_path_retry queue
#    }
#    device {
#        vendor "COMPAQ "
#        product "MSA1000 "
#        path_grouping_policy multibus
#    }
#}

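(The sample multipath file already ships with the package; run the following command to create and enable /etc/multipath.conf.)

[root@docker ~]#/sbin/mpathconf --enable

Now look at the multipath configuration file again (==this is the minimal configuration==):

[root@docker ~]#cat /etc/multipath.conf
……
defaults {
    user_friendly_names yes
    find_multipaths yes
}
……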

3. Start and check the multipath service

[root@docker ~]#systemctl enable multipathd --now
[root@docker ~]#systemctl status multipathd
● multipathd.service - Device-Mapper Multipath Device Controller
   Loaded: loaded (/usr/lib/systemd/system/multipathd.service; enabled; vendor preset: enabled)
   Active: active (running) since 二 2023-12-05 08:45:24 CST; 1s ago
  Process: 16025 ExecStart=/sbin/multipathd (code=exited, status=0/SUCCESS)
  Process: 16022 ExecStartPre=/sbin/multipath -A (code=exited, status=0/SUCCESS)
  Process: 16020 ExecStartPre=/sbin/modprobe dm-multipath (code=exited, status=0/SUCCESS)
 Main PID: 16028 (multipathd)
    Tasks: 6
   Memory: 2.2M
   CGroup: /system.slice/multipathd.service
           └─16028 /sbin/multipathd

12月 05 08:45:24 docker systemd[1]: Starting Device-Mapper Multipath Device Controller...
12月 05 08:45:24 docker systemd[1]: Started Device-Mapper Multipath Device Controller.
12月 05 08:45:24 docker multipathd[16028]: path checkers start up

### Restart the service
## systemctl restart multipathd

## On CentOS 6, restart the service with
## /etc/init.d/multipathd restart

- On CentOS 6, use the following

# check the runlevels
chkconfig --list|grep multipathd
multipathd 0:off 1:off 2:off 3:off 4:off 5:off 6:off

# enable the service for runlevels 2-5
chkconfig --level 2345 multipathd on
chkconfig --list|grep multipathd
multipathd 0:off 1:off 2:on 3:on 4:on 5:on 6:off

4. Verify multipathing

[root@docker ~]#multipath -ll

If the paths are listed with the status "active ready running", multipath is configured and working correctly.
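For a scripted check, the status test can be automated by scanning the `multipath -ll` output. This is a minimal sketch, not an official tool: `check_paths` is a hypothetical helper, and it assumes that every path line contains an `H:C:T:L` field and that a healthy path line ends in `active ready running`.

```shell
#!/bin/sh
# Read "multipath -ll" output on stdin (hypothetical helper).
# Exit 0 if every path is "active ready running", 1 if any path is not,
# 2 if no path lines were found at all.
check_paths() {
    awk '
        / [0-9]+:[0-9]+:[0-9]+:[0-9]+ / {
            total++
            if ($0 !~ /active ready running[ \t]*$/) {
                bad++
                print "NOT OK: " $0
            }
        }
        END {
            if (total == 0) { print "no paths found"; exit 2 }
            if (bad > 0) exit 1
            print "all " total " paths active ready running"
        }
    '
}

# Usage: multipath -ll | check_paths
```

The exit code makes it easy to call the check from a monitoring script or a post-change checklist.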

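⚠️ Note:

The following two steps are recorded for reference only. In my testing, the multipath service worked without them.

Update the initramfs:

After changing the multipath configuration, you may need to rebuild the initramfs so that the multipath settings are applied correctly at boot:

sudo dracut -f

Reboot the system:

To apply all changes, it is best to reboot the system:

sudo reboot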

5. Information collected before handover

- Information from the two machines

- node1

[root@NSR-db1 ~]# lsblk
NAME MAJ:MIN RM SIZE RO TYPE MOUNTPOINT
sda 8:0 1 447G 0 disk
├─sda1 8:1 1 1000M 0 part /boot
└─sda2 8:2 1 446G 0 part
  ├─sys_vg00-root_lv00 253:0 0 100G 0 lvm /
  ├─sys_vg00-swap_lv00 253:1 0 64G 0 lvm [SWAP]
  ├─sys_vg00-usr_lv00 253:2 0 10G 0 lvm /usr
  ├─sys_vg00-opt_lv00 253:3 0 20G 0 lvm /opt
  ├─sys_vg00-tmp_lv00 253:4 0 10G 0 lvm /tmp
  ├─sys_vg00-var_lv00 253:5 0 50G 0 lvm /var
  └─sys_vg00-home_lv00 253:6 0 100G 0 lvm /home
sdb 8:16 1 2.2T 0 disk
└─sdb1 8:17 1 2.2T 0 part /data1
sdc 8:32 0 1000G 0 disk
└─mpatha 253:7 0 1000G 0 mpath
sdd 8:48 0 200G 0 disk
└─mpathb 253:8 0 200G 0 mpath
sde 8:64 0 200G 0 disk
└─mpathc 253:9 0 200G 0 mpath
sdf 8:80 0 30G 0 disk
└─mpathd 253:10 0 30G 0 mpath
sdg 8:96 0 1000G 0 disk
└─mpatha 253:7 0 1000G 0 mpath
sdh 8:112 0 200G 0 disk
└─mpathb 253:8 0 200G 0 mpath
sdi 8:128 0 200G 0 disk
└─mpathc 253:9 0 200G 0 mpath
sdj 8:144 0 30G 0 disk
└─mpathd 253:10 0 30G 0 mpath
sdk 8:160 0 1000G 0 disk
└─mpatha 253:7 0 1000G 0 mpath
sdl 8:176 0 200G 0 disk
└─mpathb 253:8 0 200G 0 mpath
sdm 8:192 0 200G 0 disk
└─mpathc 253:9 0 200G 0 mpath
sdn 8:208 0 30G 0 disk
└─mpathd 253:10 0 30G 0 mpath
sdo 8:224 0 1000G 0 disk
└─mpatha 253:7 0 1000G 0 mpath
sdp 8:240 0 200G 0 disk
└─mpathb 253:8 0 200G 0 mpath
sdq 65:0 0 200G 0 disk
└─mpathc 253:9 0 200G 0 mpath
sdr 65:16 0 30G 0 disk
└─mpathd 253:10 0 30G 0 mpath
[root@NSR-db1 ~]#

[root@NSR-db1 ~]# multipath -ll
mpathd (360060e80072be00000302be000000280) dm-10 HITACHI ,OPEN-V
size=30G features='0' hwhandler='0' wp=rw
`-+- policy='service-time 0' prio=1 status=active
  |- 7:0:0:3 sdf 8:80 active ready running
  |- 7:0:1:3 sdj 8:144 active ready running
  |- 8:0:0:3 sdn 8:208 active ready running
  `- 8:0:1:3 sdr 65:16 active ready running
mpathc (360060e80072be00000302be00000027f) dm-9 HITACHI ,OPEN-V
size=200G features='0' hwhandler='0' wp=rw
`-+- policy='service-time 0' prio=1 status=active
  |- 7:0:0:2 sde 8:64 active ready running
  |- 7:0:1:2 sdi 8:128 active ready running
  |- 8:0:0:2 sdm 8:192 active ready running
  `- 8:0:1:2 sdq 65:0 active ready running
mpathb (360060e80072be00000302be00000027e) dm-8 HITACHI ,OPEN-V
size=200G features='0' hwhandler='0' wp=rw
`-+- policy='service-time 0' prio=1 status=active
  |- 7:0:0:1 sdd 8:48 active ready running
  |- 7:0:1:1 sdh 8:112 active ready running
  |- 8:0:0:1 sdl 8:176 active ready running
  `- 8:0:1:1 sdp 8:240 active ready running
mpatha (360060e80072be00000302be00000027a) dm-7 HITACHI ,OPEN-V
size=1000G features='0' hwhandler='0' wp=rw
`-+- policy='service-time 0' prio=1 status=active
  |- 7:0:0:0 sdc 8:32 active ready running
  |- 7:0:1:0 sdg 8:96 active ready running
  |- 8:0:0:0 sdk 8:160 active ready running
  `- 8:0:1:0 sdo 8:224 active ready running

[root@NSR-db1 ~]# ls /dev/sd
sda sda1 sda2 sdb sdb1 sdc sdd sde sdf sdg sdh sdi sdj sdk sdl sdm sdn sdo sdp sdq sdr
[root@NSR-db1 ~]# ls /dev/sd

[root@NSR-db1 ~]# fdisk -l

Disk /dev/sda: 480.0 GB, 479952109568 bytes, 937406464 sectors
Units = sectors of 1 * 512 = 512 bytes
Sector size (logical/physical): 512 bytes / 512 bytes
I/O size (minimum/optimal): 512 bytes / 512 bytes
Disk label type: dos
Disk identifier: 0x000d0e82

Device Boot Start End Blocks Id System
/dev/sda1 * 2048 2050047 1024000 83 Linux
/dev/sda2 2050048 937406463 467678208 8e Linux LVM
WARNING: fdisk GPT support is currently new, and therefore in an experimental phase. Use at your own discretion.

Disk /dev/sdb: 2394.4 GB, 2394444267520 bytes, 4676648960 sectors
Units = sectors of 1 * 512 = 512 bytes
Sector size (logical/physical): 512 bytes / 512 bytes
I/O size (minimum/optimal): 512 bytes / 512 bytes
Disk label type: gpt
Disk identifier: C94C497D-46C4-47D8-85B6-3C28768F5470

# Start End Size Type Name
 1 34 4676647007 2.2T Microsoft basic primary

Disk /dev/mapper/sys_vg00-root_lv00: 107.4 GB, 107374182400 bytes, 209715200 sectors
Units = sectors of 1 * 512 = 512 bytes
Sector size (logical/physical): 512 bytes / 512 bytes
I/O size (minimum/optimal): 512 bytes / 512 bytes

Disk /dev/mapper/sys_vg00-swap_lv00: 68.7 GB, 68719476736 bytes, 134217728 sectors
Units = sectors of 1 * 512 = 512 bytes
Sector size (logical/physical): 512 bytes / 512 bytes
I/O size (minimum/optimal): 512 bytes / 512 bytes

Disk /dev/mapper/sys_vg00-usr_lv00: 10.7 GB, 10737418240 bytes, 20971520 sectors
Units = sectors of 1 * 512 = 512 bytes
Sector size (logical/physical): 512 bytes / 512 bytes
I/O size (minimum/optimal): 512 bytes / 512 bytes

Disk /dev/mapper/sys_vg00-opt_lv00: 21.5 GB, 21474836480 bytes, 41943040 sectors
Units = sectors of 1 * 512 = 512 bytes
Sector size (logical/physical): 512 bytes / 512 bytes
I/O size (minimum/optimal): 512 bytes / 512 bytes

Disk /dev/mapper/sys_vg00-tmp_lv00: 10.7 GB, 10737418240 bytes, 20971520 sectors
Units = sectors of 1 * 512 = 512 bytes
Sector size (logical/physical): 512 bytes / 512 bytes
I/O size (minimum/optimal): 512 bytes / 512 bytes

Disk /dev/mapper/sys_vg00-var_lv00: 53.7 GB, 53687091200 bytes, 104857600 sectors
Units = sectors of 1 * 512 = 512 bytes
Sector size (logical/physical): 512 bytes / 512 bytes
I/O size (minimum/optimal): 512 bytes / 512 bytes

Disk /dev/mapper/sys_vg00-home_lv00: 107.4 GB, 107374182400 bytes, 209715200 sectors
Units = sectors of 1 * 512 = 512 bytes
Sector size (logical/physical): 512 bytes / 512 bytes
I/O size (minimum/optimal): 512 bytes / 512 bytes

Disk /dev/sdc: 1073.7 GB, 1073741824000 bytes, 2097152000 sectors
Units = sectors of 1 * 512 = 512 bytes
Sector size (logical/physical): 512 bytes / 512 bytes
I/O size (minimum/optimal): 512 bytes / 512 bytes

Disk /dev/sdd: 214.7 GB, 214748364800 bytes, 419430400 sectors
Units = sectors of 1 * 512 = 512 bytes
Sector size (logical/physical): 512 bytes / 512 bytes
I/O size (minimum/optimal): 512 bytes / 512 bytes

Disk /dev/sde: 214.7 GB, 214748364800 bytes, 419430400 sectors
Units = sectors of 1 * 512 = 512 bytes
Sector size (logical/physical): 512 bytes / 512 bytes
I/O size (minimum/optimal): 512 bytes / 512 bytes

Disk /dev/sdf: 32.2 GB, 32212254720 bytes, 62914560 sectors
Units = sectors of 1 * 512 = 512 bytes
Sector size (logical/physical): 512 bytes / 512 bytes
I/O size (minimum/optimal): 512 bytes / 512 bytes

Disk /dev/sdg: 1073.7 GB, 1073741824000 bytes, 2097152000 sectors
Units = sectors of 1 * 512 = 512 bytes
Sector size (logical/physical): 512 bytes / 512 bytes
I/O size (minimum/optimal): 512 bytes / 512 bytes

Disk /dev/sdh: 214.7 GB, 214748364800 bytes, 419430400 sectors
Units = sectors of 1 * 512 = 512 bytes
Sector size (logical/physical): 512 bytes / 512 bytes
I/O size (minimum/optimal): 512 bytes / 512 bytes

Disk /dev/sdi: 214.7 GB, 214748364800 bytes, 419430400 sectors
Units = sectors of 1 * 512 = 512 bytes
Sector size (logical/physical): 512 bytes / 512 bytes
I/O size (minimum/optimal): 512 bytes / 512 bytes

Disk /dev/sdj: 32.2 GB, 32212254720 bytes, 62914560 sectors
Units = sectors of 1 * 512 = 512 bytes
Sector size (logical/physical): 512 bytes / 512 bytes
I/O size (minimum/optimal): 512 bytes / 512 bytes

Disk /dev/sdk: 1073.7 GB, 1073741824000 bytes, 2097152000 sectors
Units = sectors of 1 * 512 = 512 bytes
Sector size (logical/physical): 512 bytes / 512 bytes
I/O size (minimum/optimal): 512 bytes / 512 bytes

Disk /dev/sdl: 214.7 GB, 214748364800 bytes, 419430400 sectors
Units = sectors of 1 * 512 = 512 bytes
Sector size (logical/physical): 512 bytes / 512 bytes
I/O size (minimum/optimal): 512 bytes / 512 bytes

Disk /dev/sdm: 214.7 GB, 214748364800 bytes, 419430400 sectors
Units = sectors of 1 * 512 = 512 bytes
Sector size (logical/physical): 512 bytes / 512 bytes
I/O size (minimum/optimal): 512 bytes / 512 bytes

Disk /dev/sdn: 32.2 GB, 32212254720 bytes, 62914560 sectors
Units = sectors of 1 * 512 = 512 bytes
Sector size (logical/physical): 512 bytes / 512 bytes
I/O size (minimum/optimal): 512 bytes / 512 bytes

Disk /dev/sdo: 1073.7 GB, 1073741824000 bytes, 2097152000 sectors
Units = sectors of 1 * 512 = 512 bytes
Sector size (logical/physical): 512 bytes / 512 bytes
I/O size (minimum/optimal): 512 bytes / 512 bytes

Disk /dev/sdp: 214.7 GB, 214748364800 bytes, 419430400 sectors
Units = sectors of 1 * 512 = 512 bytes
Sector size (logical/physical): 512 bytes / 512 bytes
I/O size (minimum/optimal): 512 bytes / 512 bytes

Disk /dev/sdq: 214.7 GB, 214748364800 bytes, 419430400 sectors
Units = sectors of 1 * 512 = 512 bytes
Sector size (logical/physical): 512 bytes / 512 bytes
I/O size (minimum/optimal): 512 bytes / 512 bytes

Disk /dev/sdr: 32.2 GB, 32212254720 bytes, 62914560 sectors
Units = sectors of 1 * 512 = 512 bytes
Sector size (logical/physical): 512 bytes / 512 bytes
I/O size (minimum/optimal): 512 bytes / 512 bytes

Disk /dev/mapper/mpatha: 1073.7 GB, 1073741824000 bytes, 2097152000 sectors
Units = sectors of 1 * 512 = 512 bytes
Sector size (logical/physical): 512 bytes / 512 bytes
I/O size (minimum/optimal): 512 bytes / 512 bytes

Disk /dev/mapper/mpathb: 214.7 GB, 214748364800 bytes, 419430400 sectors
Units = sectors of 1 * 512 = 512 bytes
Sector size (logical/physical): 512 bytes / 512 bytes
I/O size (minimum/optimal): 512 bytes / 512 bytes

Disk /dev/mapper/mpathc: 214.7 GB, 214748364800 bytes, 419430400 sectors
Units = sectors of 1 * 512 = 512 bytes
Sector size (logical/physical): 512 bytes / 512 bytes
I/O size (minimum/optimal): 512 bytes / 512 bytes

Disk /dev/mapper/mpathd: 32.2 GB, 32212254720 bytes, 62914560 sectors
Units = sectors of 1 * 512 = 512 bytes
Sector size (logical/physical): 512 bytes / 512 bytes
I/O size (minimum/optimal): 512 bytes / 512 bytes

[root@NSR-db1 ~]#

- node2

[root@NSR-db2 ~]# lsblk
NAME MAJ:MIN RM SIZE RO TYPE MOUNTPOINT
sda 8:0 1 447G 0 disk
├─sda1 8:1 1 1000M 0 part /boot
└─sda2 8:2 1 446G 0 part
  ├─sys_vg00-root_lv00 253:0 0 100G 0 lvm /
  ├─sys_vg00-swap_lv00 253:1 0 64G 0 lvm [SWAP]
  ├─sys_vg00-usr_lv00 253:2 0 10G 0 lvm /usr
  ├─sys_vg00-opt_lv00 253:3 0 20G 0 lvm /opt
  ├─sys_vg00-tmp_lv00 253:4 0 10G 0 lvm /tmp
  ├─sys_vg00-var_lv00 253:5 0 50G 0 lvm /var
  └─sys_vg00-home_lv00 253:6 0 100G 0 lvm /home
sdb 8:16 1 3.3T 0 disk
└─sdb1 8:17 1 3.3T 0 part /data1
sdc 8:32 0 1000G 0 disk
└─mpatha 253:7 0 1000G 0 mpath
sdd 8:48 0 200G 0 disk
└─mpathb 253:8 0 200G 0 mpath
sde 8:64 0 200G 0 disk
└─mpathc 253:9 0 200G 0 mpath
sdf 8:80 0 30G 0 disk
└─mpathd 253:10 0 30G 0 mpath
sdg 8:96 0 1000G 0 disk
└─mpatha 253:7 0 1000G 0 mpath
sdh 8:112 0 200G 0 disk
└─mpathb 253:8 0 200G 0 mpath
sdi 8:128 0 200G 0 disk
└─mpathc 253:9 0 200G 0 mpath
sdj 8:144 0 30G 0 disk
└─mpathd 253:10 0 30G 0 mpath
sdk 8:160 0 1000G 0 disk
└─mpatha 253:7 0 1000G 0 mpath
sdl 8:176 0 200G 0 disk
└─mpathb 253:8 0 200G 0 mpath
sdm 8:192 0 200G 0 disk
└─mpathc 253:9 0 200G 0 mpath
sdn 8:208 0 30G 0 disk
└─mpathd 253:10 0 30G 0 mpath
sdo 8:224 0 1000G 0 disk
└─mpatha 253:7 0 1000G 0 mpath
sdp 8:240 0 200G 0 disk
└─mpathb 253:8 0 200G 0 mpath
sdq 65:0 0 200G 0 disk
└─mpathc 253:9 0 200G 0 mpath
sdr 65:16 0 30G 0 disk
└─mpathd 253:10 0 30G 0 mpath
[root@NSR-db2 ~]#

[root@NSR-db2 ~]# multipath -ll
mpathd (360060e80072be00000302be000000280) dm-10 HITACHI ,OPEN-V
size=30G features='0' hwhandler='0' wp=rw
`-+- policy='service-time 0' prio=1 status=active
  |- 7:0:0:3 sdf 8:80 active ready running
  |- 7:0:1:3 sdj 8:144 active ready running
  |- 8:0:0:3 sdn 8:208 active ready running
  `- 8:0:1:3 sdr 65:16 active ready running
mpathc (360060e80072be00000302be00000027f) dm-9 HITACHI ,OPEN-V
size=200G features='0' hwhandler='0' wp=rw
`-+- policy='service-time 0' prio=1 status=active
  |- 7:0:0:2 sde 8:64 active ready running
  |- 7:0:1:2 sdi 8:128 active ready running
  |- 8:0:0:2 sdm 8:192 active ready running
  `- 8:0:1:2 sdq 65:0 active ready running
mpathb (360060e80072be00000302be00000027e) dm-8 HITACHI ,OPEN-V
size=200G features='0' hwhandler='0' wp=rw
`-+- policy='service-time 0' prio=1 status=active
  |- 7:0:0:1 sdd 8:48 active ready running
  |- 7:0:1:1 sdh 8:112 active ready running
  |- 8:0:0:1 sdl 8:176 active ready running
  `- 8:0:1:1 sdp 8:240 active ready running
mpatha (360060e80072be00000302be00000027a) dm-7 HITACHI ,OPEN-V
size=1000G features='0' hwhandler='0' wp=rw
`-+- policy='service-time 0' prio=1 status=active
  |- 7:0:0:0 sdc 8:32 active ready running
  |- 7:0:1:0 sdg 8:96 active ready running
  |- 8:0:0:0 sdk 8:160 active ready running
  `- 8:0:1:0 sdo 8:224 active ready running
[root@NSR-db2 ~]#

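Notes

This is only a basic multipath setup. The detailed configuration is done by the business DBAs; the purpose here is just to check that both servers see the same disks and paths presented through the SAN zoning.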
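The consistency check between the two nodes can be scripted by comparing the WWIDs each node reports. The following is a sketch under stated assumptions: `extract_wwids` and `compare_nodes` are hypothetical helpers, the two input files are `multipath -ll` outputs saved on each node, and the header lines use the `alias (wwid) dm-N` layout shown above (user_friendly_names enabled).

```shell
#!/bin/sh
# Print the sorted WWIDs (the value in parentheses on each map header line)
# from a saved "multipath -ll" output (hypothetical helper).
extract_wwids() {
    sed -n 's/^[^ ]* (\([^)]*\)) dm-[0-9]*.*/\1/p' "$1" | sort
}

# Compare two saved outputs; print nothing and return 0 when both nodes
# report the same set of WWIDs, otherwise print the diff and return non-zero.
compare_nodes() {
    a="$(mktemp)"
    b="$(mktemp)"
    extract_wwids "$1" > "$a"
    extract_wwids "$2" > "$b"
    rc=0
    diff "$a" "$b" || rc=$?
    rm -f "$a" "$b"
    return "$rc"
}

# Usage (file names are placeholders):
#   multipath -ll > node1.txt    # on node1
#   multipath -ll > node2.txt    # on node2
#   compare_nodes node1.txt node2.txt
```

This compares only the set of LUNs; the number of paths per LUN still has to be checked on each node separately.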

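Also note:

In this case the LUN mapping was done on the storage back end first, and as soon as the zones were configured the servers detected the back-end storage immediately.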

